A PC Benchmark Validation Software

Measure the genuine performance of your system with professional quality.

Why BenchMate?

Download BenchMate 14.1

The following apps are bundled with BenchMate:

Installer Executable

Windows 10 or later

Manual Installation Archive

Windows 7, 8 and 8.1 only; requires you to install BenchMate manually

Our Mission

If you have seen a PC benchmark world record, advertised performance numbers or casual benchmark results on the internet, chances are you just saw a single number, a chart or a screenshot. Is the number correct? Which Windows version was used? What was the CPU temperature? Was the time measured correctly? Even if some additional information is provided, you have to trust the source for the validity of the result, because you simply do not have enough data to understand, validate and reproduce it yourself.

A big improvement came with the advent of automated result uploads to online platforms. The time-consuming and error-prone form submission could finally be done with a single click and an internet connection. But to this day only a few benchmarks (like our own GPUPI) provide this functionality. The implementation also depends heavily on the knowledge and maintenance of the benchmark developer and therefore varies in security, reliability and, ultimately, quality. In addition, all benchmarks need to be updated regularly to provide up-to-date hardware detection for proper submission. While this was an important step for benchmarking, it still does not provide a common denominator for comparing results across different online platforms and rankings.

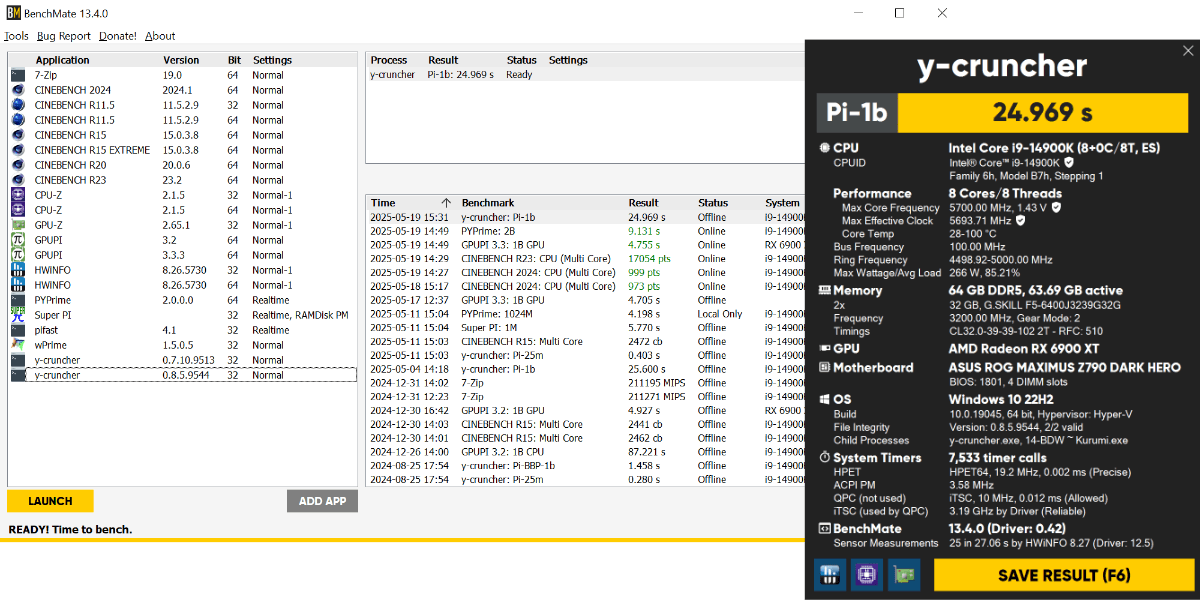

BenchMate establishes a well-considered minimum of security and timer reliability that applies to all benchmarks, yet is far more advanced than anything currently available in the industry. Benchmarks are guarded by a combination of a custom driver, two background services, the BenchMate launcher and the BenchMate client, which protect against both access with malicious intent and unknowingly skewed results.

BenchMate closely monitors the benchmark's process and measures time precisely through low-level access to the system's timer hardware. Every run is measured with up to three independent timers, allowing a maximum deviation of only 0.01% and less than 10 milliseconds. We also automatically validate all files and platform parameters of the benchmark and its workloads to prevent tampering with executables, libraries and resource files. The benchmark executable, its executed machine code and the application's logic remain untouched throughout the whole process.
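To make the two checks above more concrete, here is a minimal Python sketch of the general ideas: cross-checking several timer readings of the same run, and comparing files against known-good hashes. It is not BenchMate's implementation. The function names, the SHA-256 manifest, and the reading of the tolerance as "every pair of timers must agree within 0.01% and by less than 10 ms" are assumptions made for illustration only.

```python
import hashlib
from itertools import combinations
from pathlib import Path

# Hypothetical tolerances taken from the figures above.
# Assumption: both limits must hold for a run to be accepted.
MAX_RELATIVE_DEVIATION = 0.0001   # 0.01 %
MAX_ABSOLUTE_DEVIATION_S = 0.010  # 10 milliseconds


def timers_agree(durations_s):
    """Return True if every pair of independently measured run durations
    (in seconds) differs by at most 0.01 % and by less than 10 ms."""
    for a, b in combinations(durations_s, 2):
        diff = abs(a - b)
        if diff > max(a, b) * MAX_RELATIVE_DEVIATION or diff >= MAX_ABSOLUTE_DEVIATION_S:
            return False
    return True


def sha256_of(path):
    """Hash a file in 64 KiB chunks so large files need not fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(65536), b""):
            digest.update(chunk)
    return digest.hexdigest()


def tampered_files(manifest, root):
    """Compare files under `root` against a hypothetical manifest mapping
    relative paths to known-good SHA-256 hex digests. Return the paths
    that are missing or whose contents differ."""
    bad = []
    for rel_path, expected in manifest.items():
        path = Path(root) / rel_path
        if not path.is_file() or sha256_of(path) != expected:
            bad.append(rel_path)
    return bad


if __name__ == "__main__":
    # Three timers agreeing within about 0.2 ms on a ~12 s run: accepted.
    print(timers_agree([12.3456, 12.3457, 12.3455]))
    # 15 ms apart on a 200 s run: within 0.01 % but not under 10 ms, so rejected.
    print(timers_agree([200.0, 200.015]))
```

Requiring every pair of timers to agree, rather than trusting a single reference clock, means that one drifting or manipulated timer is enough to reject the run.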

Last but not least, BenchMate captures the result automatically before the benchmark displays it and stores it in a secure location. This lets us provide an easy, unified workflow even for unmaintained legacy benchmarks.

HWBOT, the world's biggest result database, has tackled these problems for many years to provide high-quality ranked results from a wide range of technically diverse benchmarks. With an extensive, up-to-date rule book and an elaborate manual validation process, HWBOT's moderators invest a lot of time to give the overclocking community a fair playground for competitive benchmarking. In June 2019 we joined the fight and have since developed a new benchmarking workflow that integrates result submission to HWBOT directly.

Benchmarking is in a difficult spot

BenchMate is a common result standard

Joining the fight for trustworthy benchmarking results